
Logistic regression jmp

8/5/2023

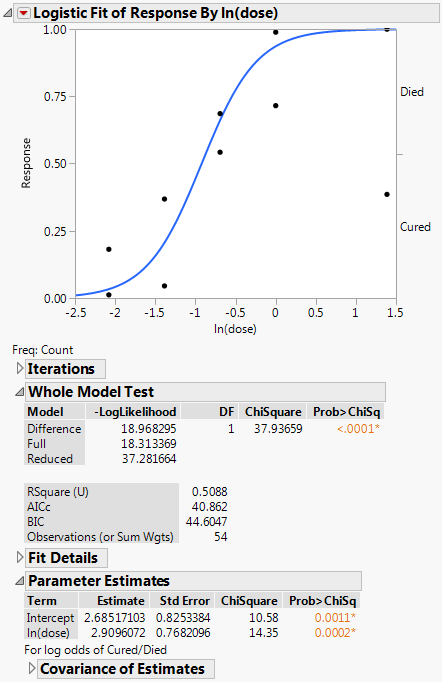

The sinking of the Titanic is one of the most infamous shipwrecks in history. On April 15, 1912, during her maiden voyage, the Titanic sank after colliding with an iceberg, killing 1,502 of the 2,228 passengers and crew. This sensational tragedy shocked the international community and motivated the adoption of better maritime safety regulations. There are many reasons the shipwreck led to such loss of life, and although there was some element of luck involved in surviving the sinking, some groups of people were more likely to survive than others.

The main aim of this research is to identify the impact of gender, passenger class, whether the passenger was accompanied, and age on a person's likelihood of surviving the shipwreck. Secondary data was used as the main data source, and it was analyzed by fitting a logistic regression model in a statistical package, SPSS. The findings showed that some passenger groups were more likely to survive than others, depending on certain demographic characteristics and on whether the passenger was traveling in first, second, or third class.

The question of how, and with what methods, the social sciences should explain phenomena is fiercely contested. While several scholars have argued that mixed methods may help to improve sociological explanations, there is a lack of highly visible examples that show the added value of this methodology.

The most basic diagnostic of a logistic regression is predictive accuracy.

To understand this, we need to look at the prediction-accuracy table (also known as the classification table, hit-miss table, or confusion matrix).

The table below shows the prediction-accuracy table produced by Displayr's logistic regression. At the base of the table you can see that the percentage of correct predictions is 79.05%. This tells us that for the 3,522 observations (people) used in the model, the model correctly predicted whether or not somebody churned 79.05% of the time. Is this a good result? The answer depends a bit on context. In this case, 79.05% is not quite as good as it might sound.

Starting with the No row of the table, we can see that there were 2,301 people who did not churn and were correctly predicted not to have churned, whereas only 274 people who did not churn were predicted to have churned. If you hover your mouse over each of the cells of the table, you see additional information, including a percentage telling us that the model accurately predicted non-churn for about 89% of those who did not churn (2,301 of 2,575).

The Yes row is less encouraging. It shows us that among people who did churn, the model was only marginally more likely to predict that they churned than that they did not (i.e., 483 versus 464). So, among people who did churn, the model correctly predicts that they churned only 51% of the time.

If you sum the first row, you can see that 2,575 people did not churn. However, if you sum the first column, you can see that the model has predicted that 2,765 people did not churn. What's going on here? As most people did not churn, the model is able to get some easy wins by defaulting to predicting that people do not churn. There is nothing wrong with the model doing this, but it is important to keep in mind when evaluating the accuracy of any predictive model. If the groups being predicted are not of equal size, the model can get away with just predicting that people are in the larger category, so it is always important to check the accuracy separately for each of the groups being predicted (in this case, churners and non-churners).
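To make the arithmetic easy to check, here is a minimal Python sketch that recomputes the overall and per-group accuracy from the four cell counts quoted above. The `summarize` function and the dictionary layout are illustrative assumptions, not part of Displayr, SPSS, or JMP. The "baseline" figure, the accuracy of always predicting the larger group, is derived here from those counts and is not a number reported in the original table.

```python
# Cell counts from the prediction-accuracy table discussed above.
# Keys are (observed, predicted) pairs.
counts = {
    ("No", "No"): 2301,    # did not churn, predicted not to churn
    ("No", "Yes"): 274,    # did not churn, predicted to churn
    ("Yes", "No"): 464,    # churned, predicted not to churn
    ("Yes", "Yes"): 483,   # churned, predicted to churn
}


def summarize(counts):
    """Return overall accuracy, per-group accuracy, and a majority baseline."""
    labels = sorted({observed for observed, _ in counts})
    total = sum(counts.values())
    correct = sum(counts[(label, label)] for label in labels)

    # Row totals: how many people were actually in each group.
    observed_totals = {
        obs: sum(counts[(obs, pred)] for pred in labels) for obs in labels
    }
    # Column totals: how many people the model placed in each group.
    predicted_totals = {
        pred: sum(counts[(obs, pred)] for obs in labels) for pred in labels
    }
    # Share of each observed group that the model classified correctly.
    per_class = {
        label: counts[(label, label)] / observed_totals[label] for label in labels
    }
    # Accuracy of always predicting the larger observed group.
    baseline = max(observed_totals.values()) / total

    return {
        "total": total,
        "accuracy": correct / total,
        "observed_totals": observed_totals,
        "predicted_totals": predicted_totals,
        "per_class": per_class,
        "baseline": baseline,
    }


summary = summarize(counts)
print(f"Observations:       {summary['total']}")
print(f"Overall accuracy:   {summary['accuracy']:.2%}")
for label, share in summary["per_class"].items():
    print(f"Accuracy for {label:<3}:   {share:.1%}")
print(f"Predicted totals:   {summary['predicted_totals']}")
print(f"Majority baseline:  {summary['baseline']:.1%}")
```

Comparing the overall accuracy with the majority baseline, alongside the per-group figures, shows how much of the headline number comes from the larger group alone.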
